October 4, 2018

Imagine being able to unlock your phone just by using your face, no fingerprint scanning or touching required. It would just work, automagically and flawlessly without any user intervention. Wouldn’t that be great?

Well, guess what… someone already made this happen. It’s called the iPhone X and you might just be using it already. But what’s even better: the potential for using face recognition for user authentication is much bigger than this! In the not-so-distant future we’ll hopefully be able to rent a car, sign legal documents and do everything else just by showing our unique facial features.

We’re already starting to see it with certain services requiring ID verification (like banking and other types of transactional systems). In this case, the legal data a user provides is cross-checked against the data on their ID, and the face image on the document is compared to the owner’s face. However, like most new technologies, face recognition introduces new ways to breach a system. One of the most popular ways to deceive a face recognition mechanism is a ‘face spoof’ attack.

A spoofing attack is an attempt to acquire someone else’s privileges or access rights by using a photo, video or a different substitute for an authorized person’s face. Some examples of attacks that come to mind:

- Print attack: The attacker uses someone’s photo. The image is printed or displayed on a digital device.

- Replay/video attack: A more sophisticated way to trick the system, which usually requires a looped video of a victim’s face. This approach makes behaviour and facial movements look more ‘natural’ than holding up someone’s photo.

- 3D mask attack: During this type of attack, a mask is used as the tool of choice for spoofing. It’s an even more sophisticated attack than playing a face video. In addition to allowing natural facial movements, it can deceive some extra layers of protection, such as depth sensors.

Spoofing Detection Approach

Some form of anti-spoofing protection should become standard in all systems based on facial recognition. There are many different approaches to tackle this challenge. The most popular state-of-the-art anti-spoofing solutions include:

- Face liveness detection: A mechanism based on analysis of how ‘alive’ a test face is. This is usually done by checking eye movement (such as blinking) and face motion.

- Contextual information techniques: By investigating the surroundings of the image, we can try detecting if there was a digital device or photo paper in the scanned area.

- Texture analysis: Here, small texture patches of the input image are probed to find patterns that distinguish spoofed images from real ones.

- User interaction: By asking the user to perform an action (turning head left/right, smiling, blinking eyes) the machine can detect if the action has been performed in a natural way which resembles human interaction.
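As an illustration of the blink check mentioned under face liveness detection, here is a minimal sketch based on the eye aspect ratio (EAR), a commonly used blink measure. It is not the approach described in this post. It assumes six (x, y) landmarks per eye are already available from some landmark detector, and the names `eye_aspect_ratio`, `count_blinks`, `closed_threshold` and `min_closed_frames`, as well as the threshold values, are hypothetical choices for the example.

```python
import math


def eye_aspect_ratio(eye):
    """Compute the eye aspect ratio from six (x, y) eye landmarks.

    Landmarks are assumed to be ordered p1..p6 around the eye outline:
    p1 and p4 are the corners, p2/p3 lie on the upper lid, p5/p6 on the lower lid.
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


def count_blinks(ear_per_frame, closed_threshold=0.2, min_closed_frames=2):
    """Count blinks in a sequence of per-frame EAR values.

    A blink is a run of at least `min_closed_frames` consecutive frames with
    EAR below `closed_threshold`, followed by the eye opening again.
    Both values are illustrative assumptions, not tuned settings.
    """
    blinks = 0
    closed_run = 0
    for ear in ear_per_frame:
        if ear < closed_threshold:
            closed_run += 1
        else:
            if closed_run >= min_closed_frames:
                blinks += 1
            closed_run = 0
    return blinks


# Toy data: an open eye shape and a short EAR sequence containing one real blink.
open_eye = [(0, 0), (1, -1), (2, -1), (3, 0), (2, 1), (1, 1)]
ear_sequence = [0.3, 0.3, 0.1, 0.1, 0.3, 0.3, 0.1, 0.3]
open_ear = eye_aspect_ratio(open_eye)
blink_count = count_blinks(ear_sequence)
```

A printed photo would produce a nearly constant EAR and therefore no blinks, which is why this kind of check is mainly useful against print attacks; a replayed video of a blinking person would still pass it.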

And of course we can’t ignore the elephant in the room, FaceID on the iPhone X. In the latest hardware iteration, Apple has introduced advanced depth-mapping and 3D-sensing techniques which enable spoofing detection with unprecedented accuracy. However, as this high-end hardware will not be available on the majority of consumer devices in the near future, we think it makes sense to double down on what’s possible with existing 2D cameras.

In fact, during our research and implementation we found out that it’s possible to achieve an extremely high level of real-time spoofing detection with a medium-quality 2D camera. The secret? Using a Deep Learning solution with a custom neural network.

We validated our approach by cross-checking it with existing, documented approaches.

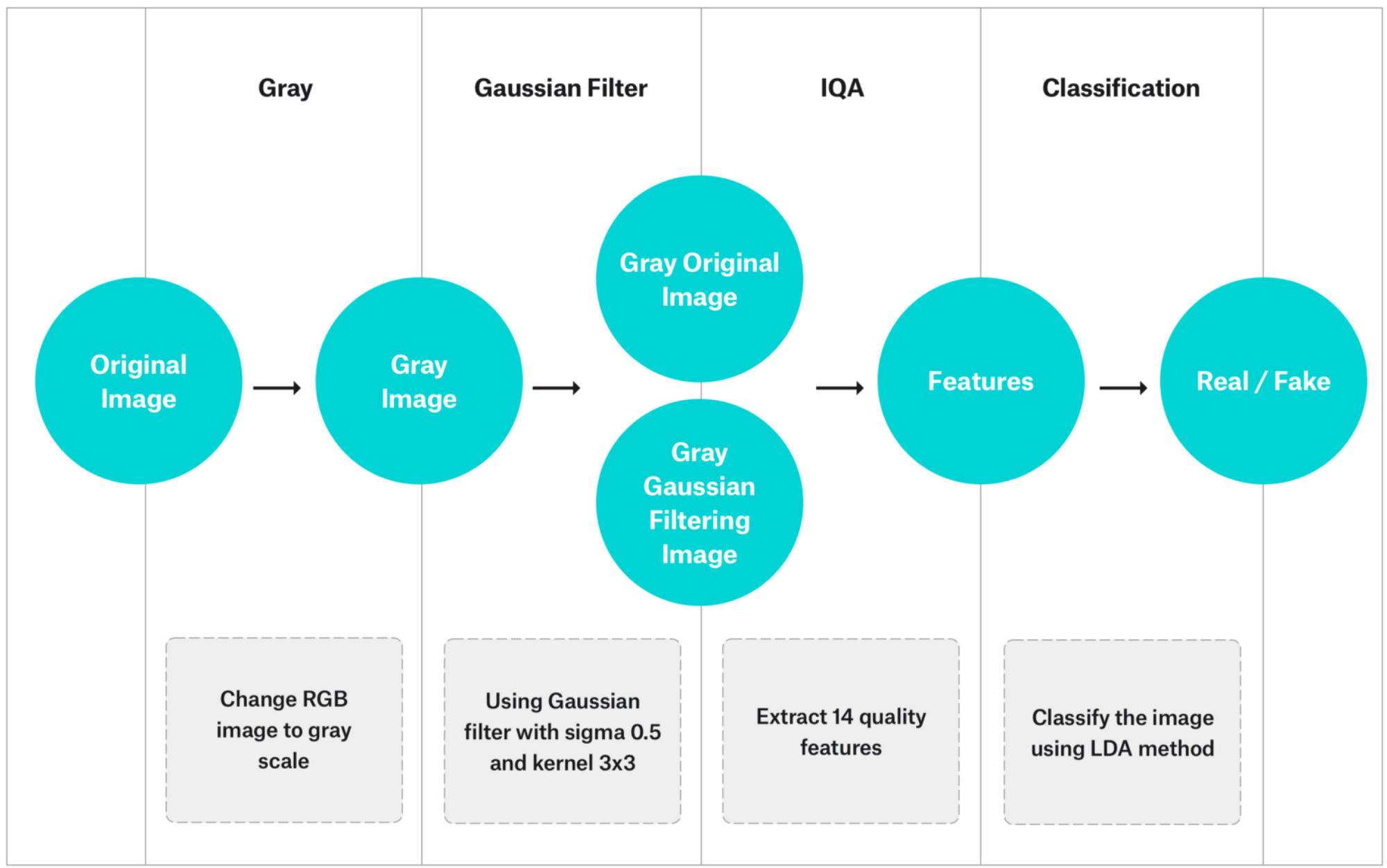

Cross-check 1: Image Quality Assessment

This solution is based on comparing the original image with a copy of it processed with Gaussian filtering. The authors of the paper [1] show that the differences between the two versions are not the same for fake images as for real ones, and that this can be detected automatically. To do that, we extract 14 popular image quality features, such as Mean Squared Error, Average Difference or Total Edge/Corner Difference. The next step is to send them to a classifier to determine whether it’s a ‘real’ face or a ‘fake’ face.
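To make the idea more concrete, here is a minimal sketch of the feature extraction step. It covers only three simple full-reference measures (Mean Squared Error, Average Difference and Maximum Difference), not the full feature set, and leaves out the classifier. The 3×3 kernel, `sigma=0.5` and all function names are assumptions made for the example, and NumPy is assumed to be installed.

```python
import numpy as np


def gaussian_kernel_1d(sigma=0.5, radius=1):
    """Normalized 1D Gaussian kernel of length 2 * radius + 1."""
    x = np.arange(-radius, radius + 1, dtype=np.float64)
    kernel = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    return kernel / kernel.sum()


def gaussian_blur(image, sigma=0.5, radius=1):
    """Separable Gaussian blur of a 2D grayscale image, with edge padding."""
    kernel = gaussian_kernel_1d(sigma, radius)
    padded = np.pad(image.astype(np.float64), radius, mode="edge")
    # Filter along rows first, then along columns; 'valid' removes the padding.
    rows = np.apply_along_axis(
        lambda r: np.convolve(r, kernel, mode="valid"), 1, padded)
    return np.apply_along_axis(
        lambda c: np.convolve(c, kernel, mode="valid"), 0, rows)


def quality_features(image):
    """Compare an image with its blurred copy and return a few quality measures."""
    reference = image.astype(np.float64)
    diff = reference - gaussian_blur(reference)
    return {
        "mse": float(np.mean(diff ** 2)),
        "average_difference": float(np.mean(diff)),
        "max_difference": float(np.max(np.abs(diff))),
    }


# Toy inputs: a flat image loses nothing when blurred, a checkerboard loses a lot.
flat = np.full((8, 8), 100.0)
checkerboard = (np.indices((8, 8)).sum(axis=0) % 2) * 255.0
flat_features = quality_features(flat)
checker_features = quality_features(checkerboard)
```

In a full pipeline, a vector of such values would be computed for every face crop and passed to a classifier trained on labelled real and spoofed examples.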

In the Image Distortion Analysis approach [2], four different features (specular reflection, blurriness, chromatic moment and color diversity) are sent for classification. The classifier is built from multiple models, each trained on a different type of spoofing attack.
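One way to combine several attack-specific models is sketched below. This is only an illustration: the fusion rule (accept a face only if every model considers it real, i.e. take the minimum score), the threshold, the assumption that features are normalized to the 0..1 range, and the two toy scoring functions are all assumptions made for the example, not details taken from the paper or from our implementation.

```python
from typing import Callable, Dict, Tuple

Features = Dict[str, float]
Scorer = Callable[[Features], float]


def fuse_scores(features: Features,
                models: Dict[str, Scorer],
                threshold: float = 0.5) -> Tuple[bool, Dict[str, float]]:
    """Score a face with every attack-specific model and fuse with a min rule.

    Each model returns a 'realness' score in 0..1. The face is accepted only
    if the lowest score still reaches the threshold.
    """
    scores = {name: model(features) for name, model in models.items()}
    is_real = min(scores.values()) >= threshold
    return is_real, scores


# Hypothetical stand-ins for trained models; real ones would be learned classifiers.
def toy_print_model(features: Features) -> float:
    return features["color_diversity"]


def toy_replay_model(features: Features) -> float:
    return 1.0 - features["specular_reflection"]


models = {"print": toy_print_model, "replay": toy_replay_model}

genuine = {"specular_reflection": 0.1, "blurriness": 0.2,
           "chromatic_moment": 0.5, "color_diversity": 0.9}
replayed = {"specular_reflection": 0.8, "blurriness": 0.4,
            "chromatic_moment": 0.5, "color_diversity": 0.9}

genuine_is_real, genuine_scores = fuse_scores(genuine, models)
replayed_is_real, replayed_scores = fuse_scores(replayed, models)
```

With a min rule, a single model flagging its own attack type is enough to reject the sample, which favours security over convenience.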

What’s Next?

The presented state-of-the-art solution only works with 2D replay/video attacks. To increase resistance to more types of attacks, the DNN model could be tweaked by extending the training data with paper-printed attack examples. Additionally, 3D spoofing attempts could be handled with additional sensors (for example, a depth sensor).

Security is a constantly evolving matter, since attackers keep finding new ways to breach the system as soon as new protection methods are introduced. But we think our unique approach could already be applied to all processes involving automatic (or semi-automatic) KYC validation to decrease the number of fraudulent accounts, or at the very least reduce the amount of manual labor (final validation) required.

[1] Face Anti-Spoofing Based on General Image Quality Assessment, Javier Galbally, Sébastien Marcel

[2] Face Spoof Detection with Image Distortion Analysis, Di Wen, Hu Han, Anil K. Jain

[3] Biometric Antispoofing Methods: A Survey in Face Recognition, Javier Galbally, Sébastien Marcel, Julian Fierrez

This post was written by Artur Baćmaga, one of YND’s AI experts. With over 6 years of experience in Python, Artur works as an ML/Python Developer at YND. He leads AI-powered processes for projects such as Car Detection & SmartBar. In need of some brain power? Reach out to us via hello@ynd.co with your questions about ML/AI projects.